AI anxiety is not about the technology - it is about losing control

Employees are not afraid of AI algorithms. They are afraid of losing agency over their work, their relevance, and their future. Here is how to fix it.

Most AI steering committees fail because they are designed to discuss, not decide. They become debate clubs that slow down implementation rather than governance bodies that accelerate it. The difference between effective and ineffective committees is not expertise - it is authority and the power to make binding decisions.

Most generative AI products have negative unit economics and lose money on every user. Here is the uncomfortable reality about AI product profitability and what it takes to build sustainable businesses.

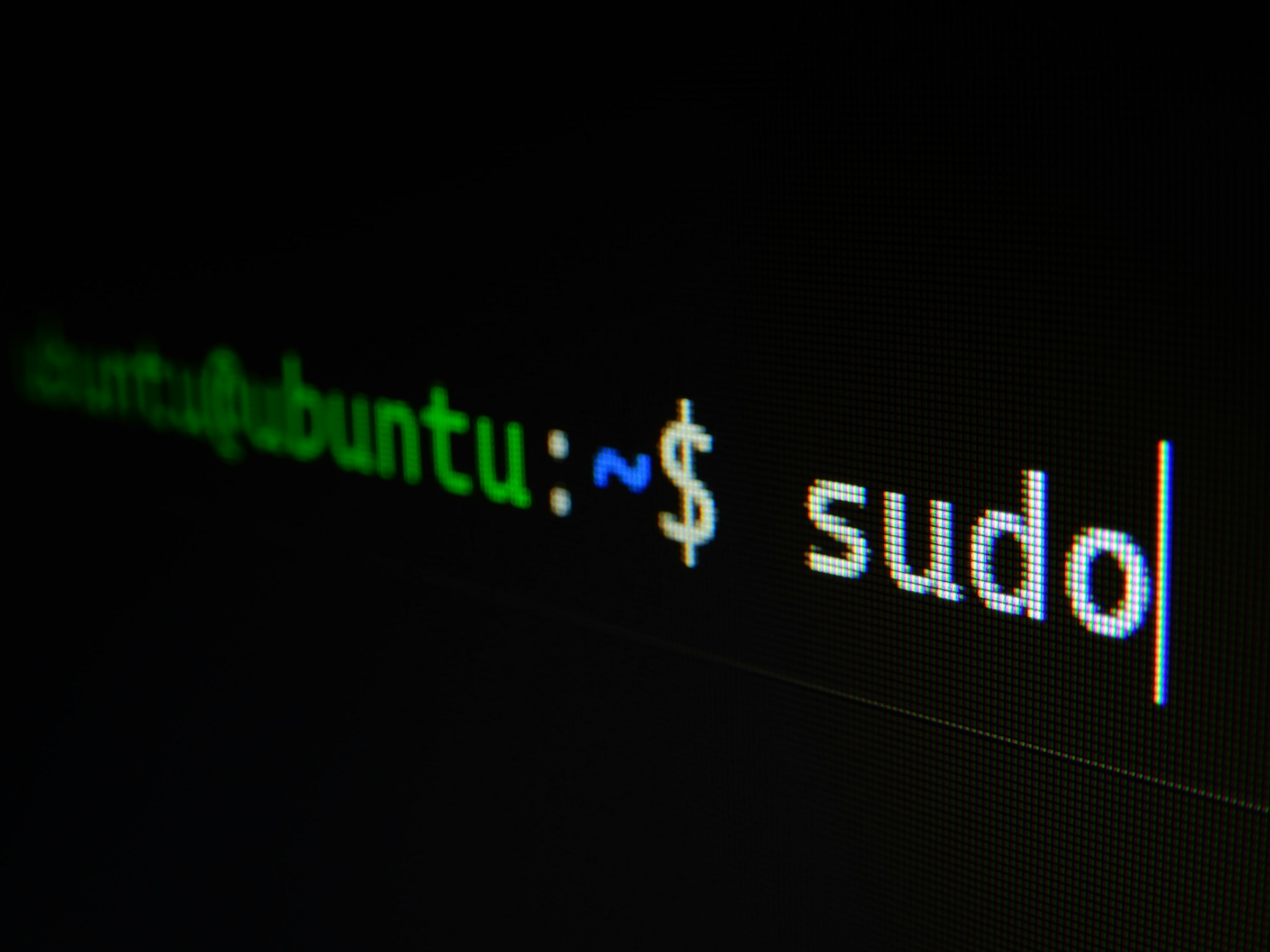

Compliance platforms charge thousands annually for what is essentially organisation software. We moved to a Git repository, Google Drive for auditor access, and AI for the tedious work. The only cheque we write now goes to our CPA firm for the actual audit.

Build a production-grade personal website with zero hosting costs. No DevOps experience needed when Claude Code handles the setup, configuration, and deployment.

Forward deployed engineers bridge the gap between software platforms and customer reality. The role fails catastrophically when filled by people without genuine coding skills.

Most AI centers of excellence become permanent bureaucratic bottlenecks that slow adoption instead of accelerating it. The smart ones are designed to dissolve as AI capability spreads throughout your organization, measuring success by how quickly they become unnecessary.

AI systems fail gradually and partially, not in clear binary states like traditional software. The model gives a plausible answer that is missing important context, latency spikes but stays under timeout limits, and outputs degrade invisibly. Your error handling must match this complexity.

Most AI strategies are elaborate 50-slide performances designed to impress investors and boards, while the boring operational work that creates actual business value gets completely ignored. A hard truth: genuine success happens in operations, not in innovation theater.

Most teams measure AI wrong - tracking model accuracy instead of business outcomes. This complete guide shows you the four measurement layers that matter, how to design dashboards that drive decisions, and why your infrastructure choice determines what you can measure.

Most AI budgets focus on software and infrastructure while ignoring the massive human time investment that drives costs. 85% of organizations misestimate AI project costs because they do not count employee hours, integration work, productivity losses, and opportunity costs. Here is a framework for calculating the true total cost of AI implementation.

Most AI tools will not exist in three years. The economics are brutal: point solutions become platform features overnight, and today's startups burn through cash twice as fast as those of a decade ago. Here is how to spot which ones survive and avoid betting your operations on doomed solutions.

Most vendor comparisons obsess over model capabilities while ignoring what actually determines success: whether they will pick up the phone when your implementation breaks at 3am. With more than 80% of AI projects failing and 85% of companies missing AI cost forecasts by more than 10%, choosing the right partner matters more than choosing the best model.

Companies waste millions choosing build or buy based on cost spreadsheets and technical capabilities. The real decision is whether your middle managers understand AI well enough to actually use whatever you build or buy. Without that understanding, both choices fail at the same rate.

We migrated to Azure OpenAI for compliance, then back to OpenAI for innovation speed. Azure is insurance, not improvement. Here is how to choose.

The real career threat is not AI replacing you - it is being replaced by someone who learned to work with AI while you did not. This shift forces millions into new roles by 2030. Here is how to build career resilience through human-AI collaboration and position yourself for what comes next.

Chain-of-thought is debugging for AI decisions. Make reasoning transparent, catch errors before they matter, and build trust with teams who need to understand why AI recommended what it did.

Mid-size companies spend tens of thousands annually on workflow tools that fragment their operations. Claude Artifacts offers a different approach - a unified AI-powered workspace that handles what used to require multiple subscriptions.

Your codebase sits at 40% test coverage, three people understand your critical systems, and hiring QA engineers costs more than your tooling budget. Claude Code generates thorough tests that catch edge cases developers miss, serving as both validation and living documentation for teams too small for dedicated QA but too large to skip testing entirely.

University of Chicago research reveals that people learn less from their own failures than from their successes because of ego protection. The solution is not avoiding mistakes but designing AI training simulations that create safe environments where controlled failure accelerates learning without the psychological cost.

Choosing between knowledge graphs and vector databases is a false choice. Knowledge graphs excel at structured relationships and reasoning, while vector databases handle semantic similarity and unstructured data. The companies getting real value from AI are using both together, and here is how to decide which approach fits your specific problem.

Stop thinking 90 days will complete your COBOL to cloud migration. Use that time instead to prove legacy modernization can work, build organizational confidence, and create momentum for the challenging multi-year transformation ahead. That is how successful modernizations actually begin.

AI frameworks promise to simplify development, but they often add more complexity than they remove through abstraction layers and dependency bloat. LangChain offers flexibility at the cost of overhead, LlamaIndex excels at data connection, while direct API implementation provides clarity and control. Here is when each approach actually makes sense for your team.

Most companies waste millions on failed replacement projects when AI augmentation could modernize legacy systems faster and cheaper without business disruption. Here is how mid-size companies can build intelligent capabilities on top of existing systems instead of expensive rip-and-replace approaches.

Most manufacturers chase predictive maintenance for their first AI project when quality control delivers results ten times faster. Computer vision catches defects humans miss, pays back in months not years, and needs cameras instead of facility-wide sensor networks. Start with quality control and measure real business outcomes immediately.

Multi-agent AI systems promise specialized intelligence but deliver compounding complexity. Communication overhead grows as n squared, costs multiply, and failure rates double. Most mid-size companies need one capable agent, not coordinated swarms.

Combining text, vision, and speech sounds powerful until you realize most implementations stack capabilities without enriching context. Real value comes from modalities that inform each other.

Open source AI models look free until you add infrastructure, staffing, and maintenance. For most mid-size companies, proprietary solutions cost less overall.

Employees resist AI not because of the technology but out of fear of becoming irrelevant. Most companies treat workplace AI resistance as a training problem when it is an identity crisis. Here is what works to address real concerns.

After spending on digital transformation, most companies discover they have earned the right to transform again. Here is what happens when consultants leave and why continuous evolution beats episodic overhauls.